Interactive Demo

Select one content image and one or more style images from the gallery below. Once you have made your selections, click the Generate button to view your personalized stylized result!

Training-free and Semantic-aware Personalized Style Transfer from Arbitrary Image References

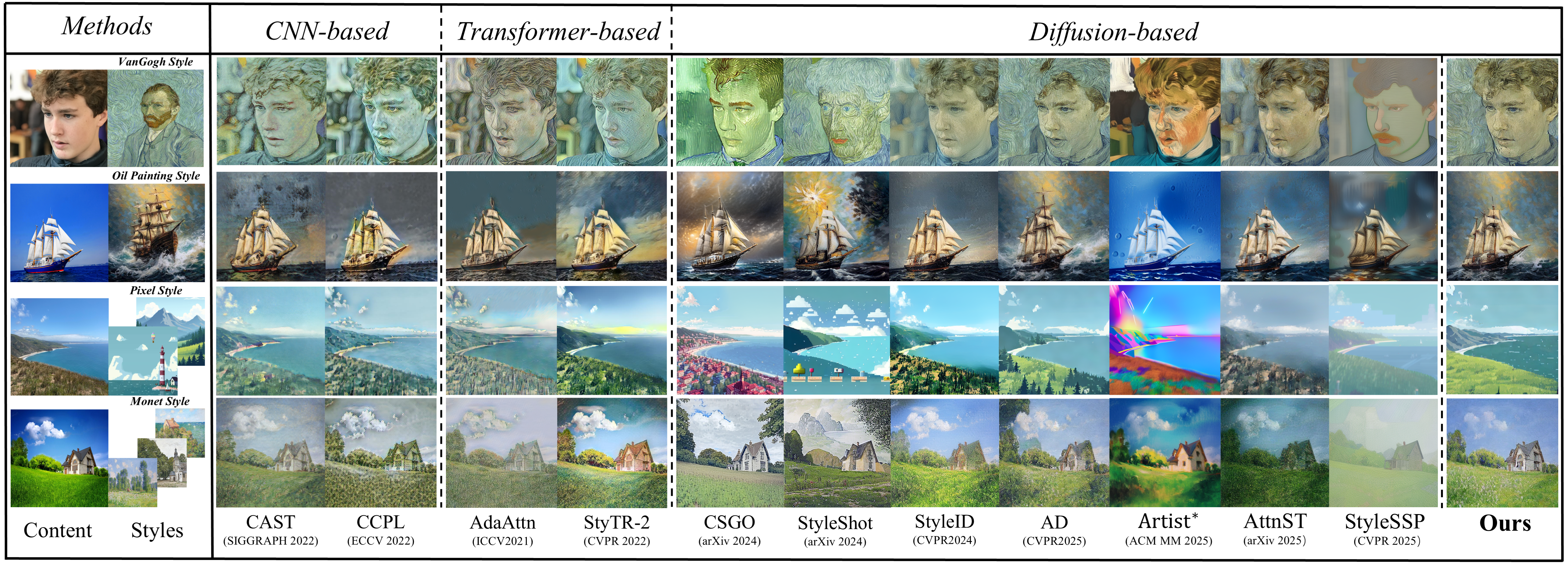

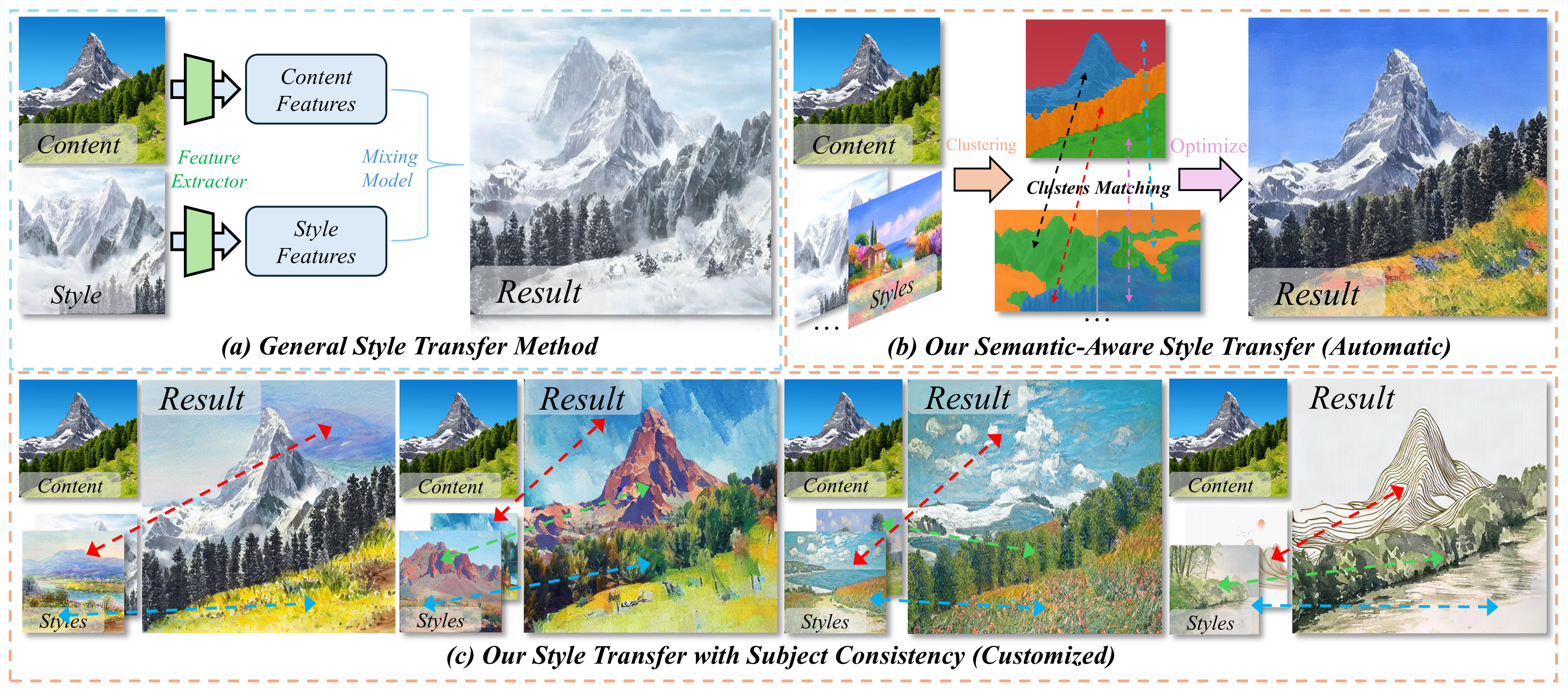

Despite advances in diffusion-based image style transfer, existing methods are commonly limited by 1) a semantic gap: the style reference may lack suitable content semantics, causing uncontrollable stylization; 2) reliance on extra constraints (e.g., semantic masks), which restricts applicability; and 3) rigid feature associations that lack adaptive global-local alignment and therefore fail to balance fine-grained stylization against global content preservation. These limitations, particularly the inability to flexibly leverage style inputs, fundamentally restrict style transfer in terms of personalization, accuracy, and adaptability. To address these limitations, we propose StyleGallery, a training-free and semantic-aware framework that supports arbitrary reference images as input and enables effective personalized customization. It comprises three core stages: semantic region segmentation (adaptive clustering on latent diffusion features to divide regions without extra inputs); clustered region matching (block filtering on extracted features for precise alignment); and style transfer optimization (energy-function-guided diffusion sampling with a regional style loss to optimize stylization). Experiments on our introduced benchmark demonstrate that StyleGallery outperforms state-of-the-art methods in content structure preservation, regional stylization, interpretability, and personalized customization, particularly when leveraging multiple style references.

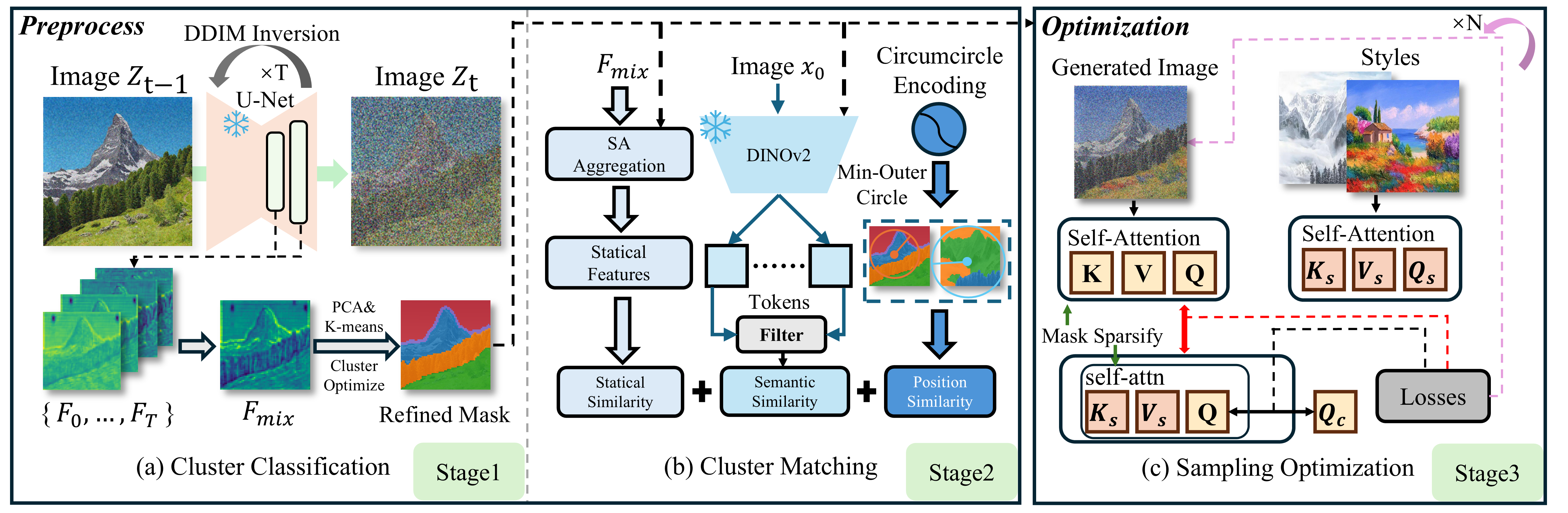

Our pipeline comprises three stages: (a) In stage 1, the content image is diffused for \(T\) steps to extract UNet features \(\{F_0,\dots,F_T\}\), which are weighted into \(F_{mix}\) and then clustered via PCA and K-means to obtain the semantic mask. (b) In stage 2, \(F_{mix}\) and the semantic mask identify cluster features, which are aggregated via self-attention to compute statistical similarity. Meanwhile, DINOv2 splits \(x_0\) into blocks; its tokens are filtered to compute semantic similarity, while cluster positions yield positional similarity. (c) In stage 3, generation is optimized over \(N\) latent sampling steps. UNet attention maps are extracted, sparsified using the semantic mask, and recombined with the style features (\(K_s\), \(V_s\)). L1 distances are then computed between the actual and combined feature maps, as well as between \(Q\) and \(Q_c\). These losses guide the final image generation.
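As a rough illustration of stage 1, the sketch below mixes per-timestep feature maps into \(F_{mix}\), reduces them with PCA, and clusters the pixels with K-means to get a label mask. It is a minimal NumPy sketch under stated assumptions, not the authors' implementation. The function names (`mix_features`, `pca_reduce`, `kmeans`, `semantic_mask`) are hypothetical, as are the weight normalization, the number of PCA components, and the cluster count `k`, none of which the text above specifies. Plain arrays stand in for real UNet features, and a deterministic farthest-first initialization is swapped in for K-means seeding to keep the example reproducible.

```python
import numpy as np

def mix_features(features, weights):
    """Weighted sum of per-timestep feature maps, each of shape (H, W, C)."""
    w = np.asarray(weights, dtype=np.float64)
    w = w / w.sum()                        # assumption: weights are normalized to sum to 1
    stacked = np.stack(features, axis=0)   # (T, H, W, C)
    return np.tensordot(w, stacked, axes=1)  # (H, W, C)

def pca_reduce(x, n_components):
    """Project the rows of x (N, C) onto the top principal components via SVD."""
    centered = x - x.mean(axis=0, keepdims=True)
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered @ vt[:n_components].T

def kmeans(x, k, n_iter=50):
    """Lloyd's algorithm with farthest-first initialization (a simplification)."""
    centers = [x[0]]
    for _ in range(1, k):
        # Squared distance from every point to its nearest chosen center.
        d = np.min([((x - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(x[d.argmax()])
    centers = np.stack(centers)
    for _ in range(n_iter):
        dists = ((x[:, None, :] - centers[None, :, :]) ** 2).sum(axis=-1)
        labels = dists.argmin(axis=1)
        # Recompute each center; keep the old one if a cluster is empty.
        new_centers = np.stack([
            x[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
            for j in range(k)
        ])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels

def semantic_mask(features, weights, k, n_components=3):
    """Mix features, reduce with PCA, and cluster pixels into k regions."""
    f_mix = mix_features(features, weights)
    h, w, c = f_mix.shape
    reduced = pca_reduce(f_mix.reshape(h * w, c), n_components)
    return kmeans(reduced, k).reshape(h, w)
```

Calling `semantic_mask(feats, weights, k=2)` on a list of `(H, W, C)` arrays returns an `(H, W)` integer mask with one label per region. In a real pipeline the inputs would be UNet activations collected during diffusion, and the number of regions would presumably be chosen adaptively rather than fixed.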